😼 Impact of Model Characteristics

Model Scale

Model Scale Analysis on AnesBench

📌 Conclusion 1.1:

Model performance is strongly positively correlated with model scale, yet this relationship exhibits diminishing marginal returns.
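One way to check for diminishing marginal returns is to compare the score gained per added billion parameters between consecutive model sizes. The sketch below is only illustrative: the function names `marginal_gains` and `has_diminishing_returns` are hypothetical, and it assumes you supply your own (parameter count, accuracy) pairs sorted by size. It is not the analysis procedure used for AnesBench.

```python
from typing import List, Sequence


def marginal_gains(sizes_b: Sequence[float], scores: Sequence[float]) -> List[float]:
    """Score gain per added billion parameters between consecutive models.

    sizes_b: parameter counts in billions, strictly increasing (assumed input format).
    scores:  benchmark accuracy for each model, in the same order.
    """
    if len(sizes_b) != len(scores):
        raise ValueError("sizes_b and scores must have the same length")
    gains = []
    for i in range(1, len(sizes_b)):
        added_params = sizes_b[i] - sizes_b[i - 1]
        if added_params <= 0:
            raise ValueError("sizes_b must be strictly increasing")
        gains.append((scores[i] - scores[i - 1]) / added_params)
    return gains


def has_diminishing_returns(sizes_b: Sequence[float], scores: Sequence[float]) -> bool:
    """True if scores keep rising while each marginal gain is smaller than the last."""
    gains = marginal_gains(sizes_b, scores)
    if len(gains) < 2:
        return False  # need at least three models to compare two gains
    still_improving = all(g > 0 for g in gains)
    shrinking = all(later < earlier for earlier, later in zip(gains, gains[1:]))
    return still_improving and shrinking
```

A positive but shrinking sequence of gains matches the pattern described above: larger models still score higher, but each added parameter buys less.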

📌 Conclusion 1.2:

Increasing model scale yields significantly smaller performance gains on System 2 questions than on System 1 questions.

Model Scale Analysis on AMCQA

📌 Conclusion 1.3:

Consistent with the findings on AnesBench, the Chinese AMCQA benchmark also shows a positive correlation between model performance and scale, with diminishing returns. However, the distinction among System 1, System 1.x, and System 2 is not pronounced, potentially because the dataset's difficulty levels are only weakly differentiated.

Language Transferability

Language Transferability Analysis

📌 Conclusion 1.4:

Language transfer ability remains critical for multilingual model performance in anesthesia reasoning. Once a model's language ability has stabilized, we recommend continued pre-training to address the deficiency in domain-specific knowledge across languages.

Model’s CoT Length

CoT Length Analysis

📌 Conclusion 1.5:

Models that achieve superior performance on System 2 questions often engage in longer CoT reasoning processes.

😺 Impact of Training Strategies and Reasoning Techniques

Training Strategies

Training Strategies Analysis

📌 Conclusion 2.1:

Continuous pre-training (CPT), by adapting models to domain-specific data prior to fine-tuning, yields significant performance gains.

📌 Conclusion 2.2:

Training on a combination of factual and problem-solving data shows that strong reasoning relies on both declarative and procedural knowledge.

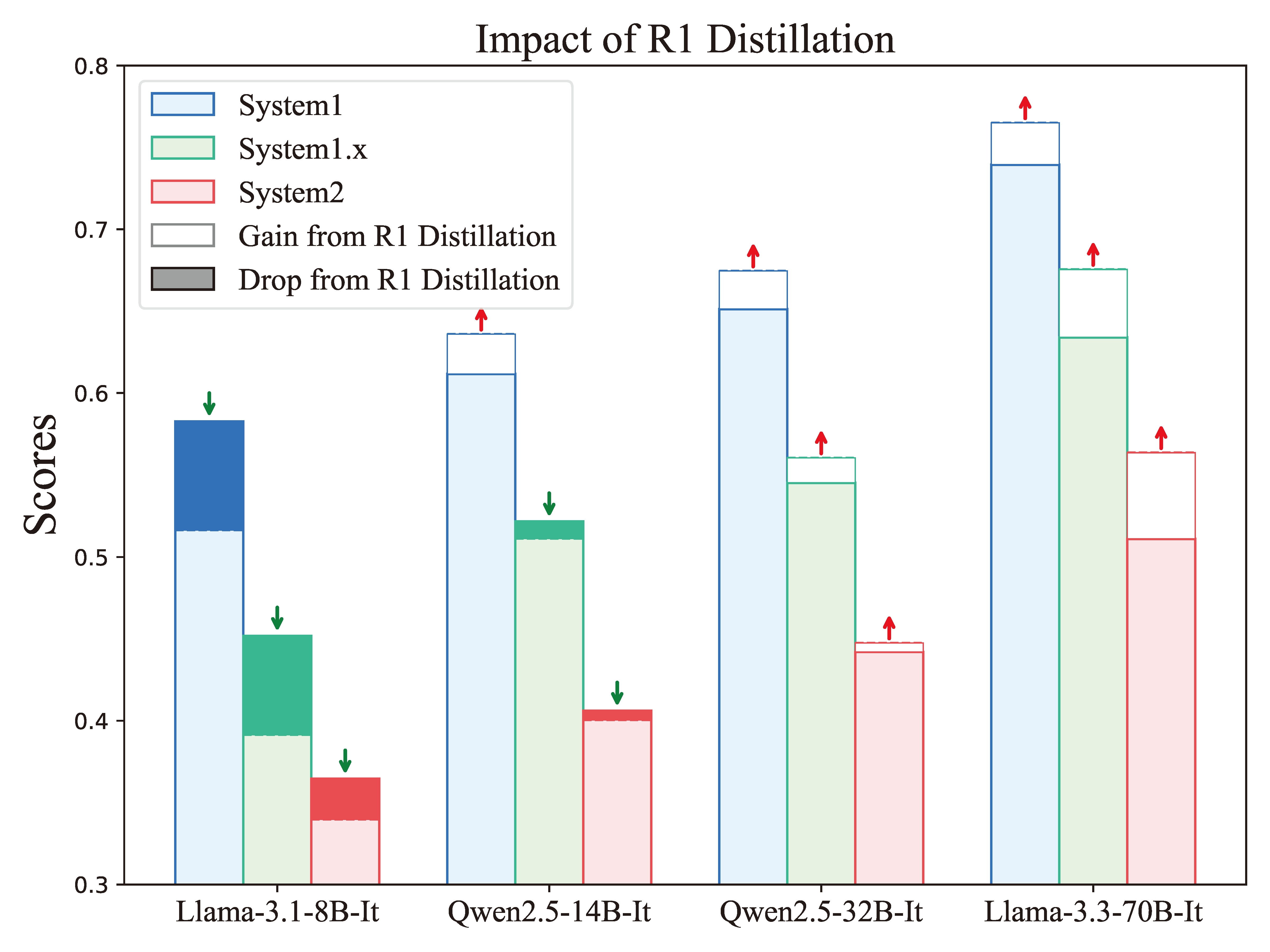

R1 Distillation

Distillation Analysis

📌 Conclusion 2.3:

Reasoning skills distilled from diverse tasks transfer well to niche domains, suggesting reasoning is more generalizable than domain knowledge.

📌 Conclusion 2.4:

Larger models better absorb complex reasoning, highlighting the key role of model capacity in learning structured thinking.

Reasoning Strategies

Reasoning Strategies Analysis

📌 Conclusion 2.5:

Test-time strategies that invest more compute to explore alternative reasoning paths can yield meaningful performance gains.
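As one illustration of spending extra test-time compute on alternative reasoning paths, the sketch below samples several answers to the same multiple-choice question and keeps the most frequent one (majority voting, often called self-consistency). This technique is chosen here as an example and is not necessarily the strategy evaluated above. `sample_answer` is a hypothetical callable standing in for whatever model call returns a single option letter.

```python
from collections import Counter
from typing import Callable, Sequence


def majority_vote(answers: Sequence[str]) -> str:
    """Return the most frequent answer after trimming whitespace and upper-casing.

    On a tie, the answer that appeared first among the samples wins.
    """
    if not answers:
        raise ValueError("need at least one sampled answer")
    normalized = [a.strip().upper() for a in answers]
    return Counter(normalized).most_common(1)[0][0]


def answer_with_voting(
    question: str,
    sample_answer: Callable[[str], str],  # hypothetical: one sampled model answer per call
    n_samples: int = 5,
) -> str:
    """Sample n_samples independent answers and return the majority choice."""
    samples = [sample_answer(question) for _ in range(n_samples)]
    return majority_vote(samples)
```

Each extra sample costs one more model call, so `n_samples` trades compute for stability of the final answer.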